Western perspectives on technology tend to dominate the media, despite the fact that technology’s impacts on people’s lives are nuanced, diverse and contextually specific. At our March 8 Technology Salon NYC (hosted by Thoughtworks) we discussed how structural issues in journalism and technology lead to narrowed perspectives and reduced nuance in technology reporting.

Joining the discussion were folks from for-profit and non-profit US-based media houses with global reporting remits, including: Nabiha Syed, CEO, The Markup; Tekendra Parmar, Tech Features Editor, Business Insider; Andrew Deck, Reporter, Rest of World; and Vittoria Elliot, Reporter, WIRED. Salon participants working for other media outlets and in adjacent fields contributed to our discussion as well.

Power dynamics are at the center. English language technology media establishments tend to report as if tech stories begin and end in Silicon Valley. This affects who media talks and listens to, what stories are found and who is doing the finding, which angles and perspectives are centered, and who decides what is published. As one Salon participant said, “we came to the Salon for a conversation about tech journalism, but bigger issues are coming up. This is telling, because, no matter what type of journalism you’re doing, you’re reckoning with wider systemic issues in journalism… [like] how we pay for it, who the audiences are, how we shift the sense of who we’re reporting for, and all the existential questions in journalism.”

Some media outlets are making an intentional effort to better ground stories in place, cultural context, political context, and non-Western markets in order to challenge certain assumptions and biases in Silicon Valley. Their work aims to bring non-US-centric stories to a wider general audience in the US and abroad and to enter the media diet of Silicon Valley itself to change perspectives and expand world views using narrative, character, and storytelling that is not laced with US biases.

Challenges remain with building global audiences, however. Most publications have only a handful of people focusing on stories outside of their headquarters country. Yet “in addition to getting the stories – you also have to build global and local networks so that the stories get distributed,” as one person said. US media outlets don’t often invest in building relationships with local influencers and policy makers who could help to spread a story, react to it, or act on it. This can mean there is little impact and low readership, leading decision makers at media outlets to say “see, we didn’t have good metrics, those kinds of stories don’t perform well.” This is not only the case for journalism in the US. An Indian reader may not be interested in reading about the Philippines and vice versa. So, almost every story needs a different conceptualization of audience, which is difficult for publications to afford and achieve.

Ad-revenue business models are part of the problem. While the vision of a global audience with wide perspectives and nuance is lofty, the practicalities of implementation make it difficult. Business models based on ad revenue (clicks, likes, time spent on a page) tend to reinforce status quo content at the cost of excluding non-Western voices and other marginalized users of technology. Moving to alternative ways to measure impact can be hard for editors that have been working in the for-profit industry for several years. Even in non-profit media, “there is a shadow cast from these old metrics…. Donors will say, ‘okay, great, wonderful story, super glad that there was a regulatory change… but how many people saw it?’ And so there’s a lot of education that needs to happen.”

Identifying new approaches and metrics. Some Salon participants are looking at how to get beyond clicks to measure impact and journalism’s contribution to change without committing the sin of centering the story on the journalist. Some teams are testing “impact meetings,” with the reporting team looking at “who has power – Consumers? Regulators? Legislators? Civil society? Mapping that out, and figuring out what form the information needs to be in to get into audiences’ hands and heads… Cartoons? Instagram? An academic conversation? We identify who in the room has some power, get something into their hands, and then they do all the work.”

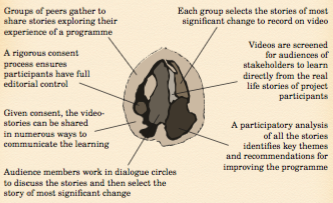

Another person talked about creating Listening Circles to develop participatory and grounded narratives that will have greater impact. In this case, journalists convene groups of experts and people with lived experiences on a particular topic to learn who the power brokers are, what key topics need to be raised, what the media is covering too much or too little of, and what stories or perspectives are missing from this coverage. This is similar to how a journalist normally works (talking with sources), except that the sources are in a group together and can sharpen each other’s ideas. In this sense, media works as a convener to better understand the issue and themes. It makes space for smaller, more grounded organizations to join the conversations. It also helps media outlets identify key influencers and involve them from the start so that they are more interested in sharing the story when it’s ready to go. This can help catalyze ongoing movement on the theme or topic among these organizations.

These approaches resemble the advocacy, community development, communication for development, and social and behavior change communication approaches used in the development sector, since they include an entryway, a plan for inclusion from the start, an off ramp and handover, and an understanding that the media agency is not the center of the story but can feed extra energy into a topic to help it move forward.

The difference between journalism and advocacy has emerged as a concern as traditional approaches to reporting change. Participatory work is often viewed as being less “objective” and more like advocacy. “Should journalists be advocates or not?” is a key question. Yet, as noted during the Salon discussion, journalists have always interrogated the actions of powerful people – e.g., the Elon Musks of the world. “If we’re going to interrogate power, then it’s not a huge jump to say we want to inform people about the power they already have, and all we’re doing is being intentional about getting this information to where it needs to go,” one person commented.

Another Salon participant agreed. “If you break a story about a corrupt politician, you expect that corrupt politician to be hauled before whatever institutions exist or for them to lose their job. No one is hand-wringing there about whether we’ve done our jobs well, right? It is when we start to take active interest in areas that are considered outside of traditional media, when you move from politics and the economy to technology or gender or any of these other areas considered ‘softer’, that there is a sense that you have shifted into activism and are less focused on hard-hitting journalism.” Another participant said “there’s a real discomfort when activist organizations like our work… Even though the idea is that you’re supposed to be creating impact, you’re not supposed to want that activist label.”

Identity and objectivity came up in the discussion as well. “The people who are most precious about whether we are objective tend to be a cohort at the intersection of gender, race, and class. Upper middle class white guys are the ones who can go anywhere in the world and report any story and are still ‘objective’. But if you try and think about other communities reporting on themselves or working in different ways, the question is always, ‘wait, how can that be done objectively?’”

A Pew Research poll in 2022 found that overall, 76% of journalists in the US are white and 51% are male. Looking at specific beats, 60% of political reporters and 58% of tech journalists are men, and 77% of science and tech reporters are white, 7% Asian, 3% Black, and 3% Hispanic. Some Salon participants pointed out that this is a human resource and hiring problem that derives from structural issues both in journalism and the wider world. In tech reporting and the media space in general, those who tend to be hired are English speaking, highly educated, upper or upper middle class people from a major metropolitan area in their country. There are very few media outlets that bring in other perspectives.

Salon participants pointed to these statistics and noted that white, US-born journalists are considered able to “objectively” report on any story in any part of the world. They can “parachute in and cover anything they want.” Yet non-white and/or non-US-born and queer journalists are either shoehorned into being experts on their own race, gender, sexual orientation or ethnicity/national identity, or seen as unable to be objective because of their identities. “If you’re an English speaking, educated person from the motherland, [it’s assumed that] your responsibility is to tell the story of your people.”

In addition, the US flattens nuance in racism, classism, and other equity issues. Because the US is in an era of diversity, said one Salon participant, media outlets think it’s enough to find a Brown person and put them in leadership. They don’t often look at other issues like race, class, caste or colorism or how those play out within communities of color. “You also have to ask the question of, okay, which people from this place have the resources, the access to get the kind of education that makes them the people that institutions rely on to tell the stories of an entire country or region. How does the system reinforce, again, that internal class dynamic or that broader class and racial dynamic, even as it’s counting for ‘diversity’ on the internal side.”

Waiting for harm to happen. Another challenge raised with tech reporting is the tendency to wait until something terrible happens before a story or issue is covered. News outlets wait until a problem is acute and then write an article and say “look over here, this is happening, isn’t that awful, someone should do something,” as one Salon participant said. The mandate tends to be to “wait until harm is bad enough to be visible before reporting” rather than reducing or mitigating harm. “With technology, the speed of change is so rapid – there needs to be something beyond the horse-race journalism of ‘here’s some investment, here’s a new technology, here’s a hot take and here’s why that matters.’ There needs to be something more meaningful than that.”

Newsworthiness is sometimes weaponized to kill reporting on marginalized communities, said one person. Pitches are judged through the subjectivity and lived experiences of senior editors who may not have a nuanced understanding of how technologies and related issues affect queer communities and/or people of color. Reporters often have to find an additional “hook” to get approval to run a story about these groups or populations because the story itself is not considered newsworthy enough. The hook will often be something that ties it back to Silicon Valley. For example, a story deemed “not newsworthy” might suddenly become important when it can be linked to something that a powerful person in tech does. Reporters have to be creative to get buy-in for international stories whose importance is not fully grasped by editors; for example, by pitching how a story will bring in subscriptions, traffic, or an award, or by running a US-focused story that does well, and then pitching the international version of the story.

Reporting on structural challenges in tech. Media absolutely helps bring issues to the forefront, said one Salon participant, and there are lots of great examples recently of dynamic investigative reporting and layered, nuanced storytelling. It remains difficult, however, to report on structural issues or infrastructure. Many of the harms that happen due to technology need to be resolved at the policy, regulatory, or structural level. “This is the ‘boring’ part of the story, but it’s where everything is getting cemented in terms of what technology can do and what harms will result.”

One media outlet tackled this by conducting research to show structural barriers to equity in technology access. A project measured broadband speeds in different parts of cities across the US during COVID to show how inequalities in bandwidth affected people’s access to jobs, income and services. The team joined up with other media groups and shared the data so that it could reach different audiences through a variety of story lines, some national and some local.

The field is shifting, as one Salon participant concluded, and it’s all about owning the moment. “You must own the choices that you’re making…. I do not care if this thing called journalism and these people called journalists continue to exist in the way that they do now… We must rediscover the role of the storyteller who keeps us alive and gives meaning to our societies. This model [of journalism] was not built for someone like me to engage in it fully, to see myself reflected in it fully. Institutional journalism was not made for many of the people in this room. It was not made for us to imagine that we are leaders in it, bearers of it, creators of it, or anything other than just its subjects in some sort of ‘National Geographic’ way. And that means owning the moment that we’re in and the opportunities it’s bringing us.”

Technology Salons run under Chatham House Rule, so no attribution has been made in this post. If you’d like to join us for a Salon, sign up here. If you’d like to suggest a topic or provide funding support to Salons in NYC please get in touch!